
Most Recent

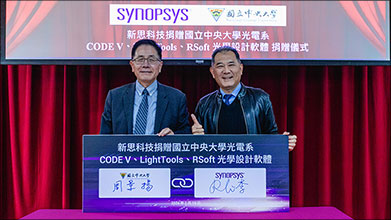

CODE V

Detailed Workflow to Analyze Stray Light in Smartphone Cameras Using CODE V and LightTools

By Optical Solutions Editorial Team

Read More →

Transforming Optical Design Workflows: The Latest Release of CODE V

By Optical Solutions Editorial Team

Read More →

LightTools

Lens Surface Conversion Increases Flexibility for Refining Surface Geometry in LightTools

By Optical Solutions Editorial Team

Read More →

Detailed Workflow to Analyze Stray Light in Smartphone Cameras Using CODE V and LightTools

By Optical Solutions Editorial Team

Read More →

Discover the New LightTools 2023.03: Your Path to More Efficient Illumination Design

By Optical Solutions Editorial Team

Read More →

LucidShape

Streamline the Workflow: How Automotive Lighting Designers Can Maximize Productivity with LucidShape

By Optical Solutions Editorial Team

Read More →

4 New Ways to Save Design Time in LucidShape CAA V5 Based

By Optical Solutions Editorial Team

Read More →

Why Photorealistic Simulations are Pivotal in Automotive Lighting Design

By Optical Solutions Editorial Team

Read More →

Scattering Measurements

How Can Light Scattering Measurements Improve Aerospace Optical Projects?

By Marion Gaboriau Gil

Read More →

Improve Your AR/VR Design Simulations with Optical Scattering Measurements

By Optical Solutions Editorial Team

Read More →

The Crucial Role of Reflectance and Transmittance Measurements in Optical Design

By Optical Solutions Editorial Team

Read More →